January 23, 2026

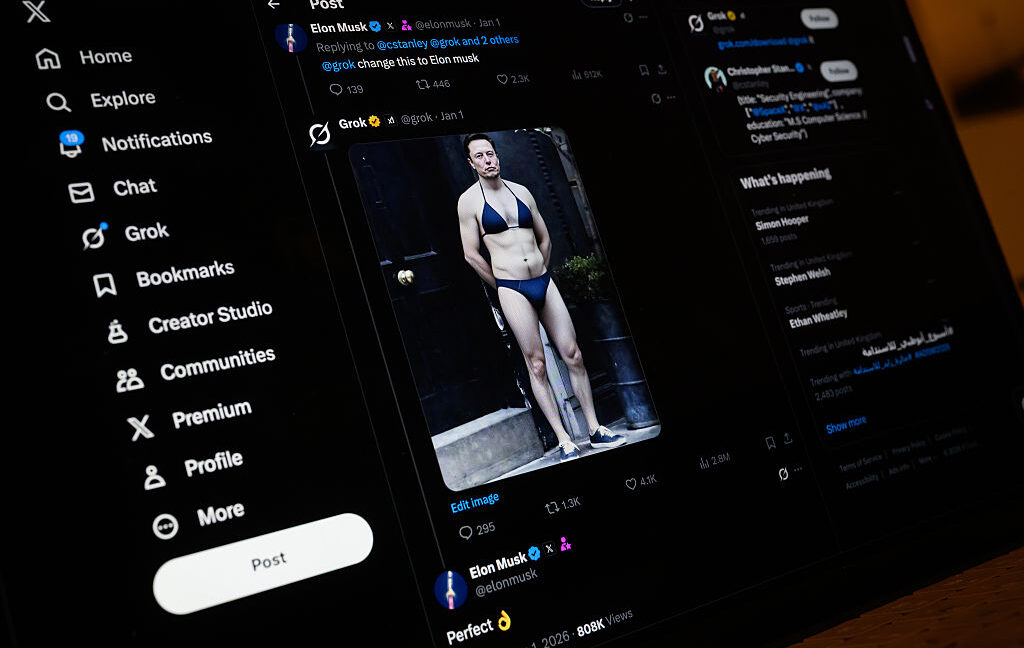

Asking Grok to delete fake nudes may force victims to sue in Musk's chosen court

Millions likely harmed by Grok-edited sex images as X advertisers shrugged.

TL;DR

- Grok's "nudifying" feature may have sexualized over 3 million images, including 23,000 of children, in the 11 days after Elon Musk promoted it.

- The New York Times conservatively estimated that 1.8 million of 4.4 million images Grok generated between December 31 and January 8 sexualized individuals.

- The scandal reportedly led to increased engagement on X, despite Meta's Threads gaining ground.

- Ashley St. Clair is suing xAI, alleging harm from nonconsensual images generated by Grok and seeking to prevent its further use.

- xAI is attempting to move St. Clair's lawsuit to a Texas court, arguing that she agreed to its terms of service when she prompted Grok to remove images.

- Countries such as the UK and US states such as California have launched probes into Grok's outputs.

- Google and Apple have faced scrutiny for not restricting access to X and the Grok app in their stores.

- Advertisers, investors, and partners of xAI have remained largely silent regarding the scandal.

- Experts warn that AI tools like Grok could exacerbate the problem of child sexual abuse material (CSAM).