
February 5, 2026

Why your AI output feels generic (it's not your prompting), plus 4 prompts to fix it and an AI customization guide

The complaint about AI output is always the same: it’s fine. Helpful. Competent. Polite. You can’t point to an error. But when you read the response — really read it, not just scan — it doesn’t feel like it was written for you. The career advice applies to someone in roughly your situation but not your actual situation. The code works but doesn’t match how your team builds. The restaurant recommendations hit the tourist spots, not the places you’d actually like.

TL;DR

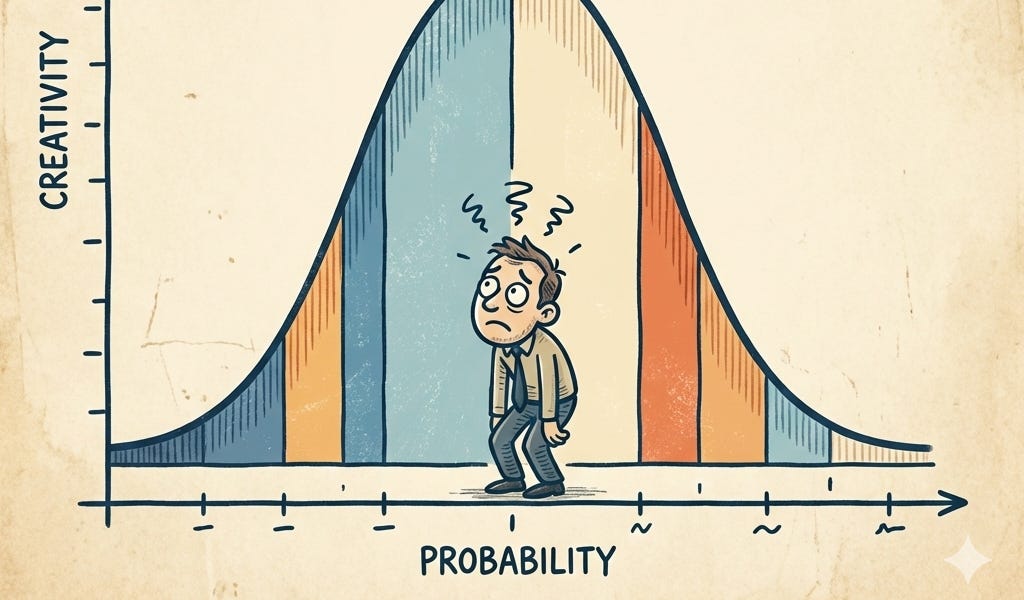

- AI output feels generically fine because models are optimized to satisfy a hypothetical typical user, not an individual.

- Training, particularly reinforcement learning from human feedback (RLHF), calibrates responses to a statistical center rather than to a specific user's context.

- Better prompting improves the AI experience but doesn't fix the underlying issue: the model is still optimized for a generic user.

- Four mechanisms beyond prompting (memory, instructions, tools, and style controls) are being developed to help users escape generic AI output.

- Used deliberately over time, these mechanisms let corrections compound and context accumulate, producing significantly more personalized results.

- The article explores why AI output is 'averaged,' the four personalization levers, the compounding effect of steering, the boundaries of personalization, and how to run a steering audit.
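The "statistical center" argument can be made concrete with a toy calculation. The sketch below is an illustrative assumption, not a description of how RLHF actually works: it imagines three hypothetical users who each want a different level of detail (on a 0 to 1 scale), penalizes a response by its squared distance from each user's preference, and searches for the single response that does best on average.

```python
# Toy model (illustrative assumption, NOT how RLHF is implemented):
# each hypothetical user prefers a different amount of detail on a 0-1 scale,
# and a response is penalized by its squared distance from that preference.
from statistics import mean


def penalty(response: float, preference: float) -> float:
    # Lower is better; 0.0 means the response matches the user exactly.
    return (response - preference) ** 2


def best_single_response(preferences: list[float], steps: int = 1000) -> float:
    # Grid-search the one response value that minimizes the average penalty
    # across all users, i.e. "optimize for the typical user".
    candidates = [i / steps for i in range(steps + 1)]
    return min(candidates, key=lambda r: mean(penalty(r, p) for p in preferences))


preferences = [0.1, 0.5, 0.9]  # hypothetical users with different needs
generic = best_single_response(preferences)
print(f"generic response: {generic:.2f}")  # lands at the mean preference

for p in preferences:
    # Compare the one-size-fits-all response with one tuned to this user.
    print(
        f"user wants {p:.1f}: generic penalty {penalty(generic, p):.2f}, "
        f"personalized penalty {penalty(p, p):.2f}"
    )
```

In this toy framing, the averaged response lands at 0.5: it suits the middle user exactly but leaves the other two equally far off, while a response tuned to each user's own preference would carry no penalty for anyone. That gap is what the personalization levers above aim to close.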
