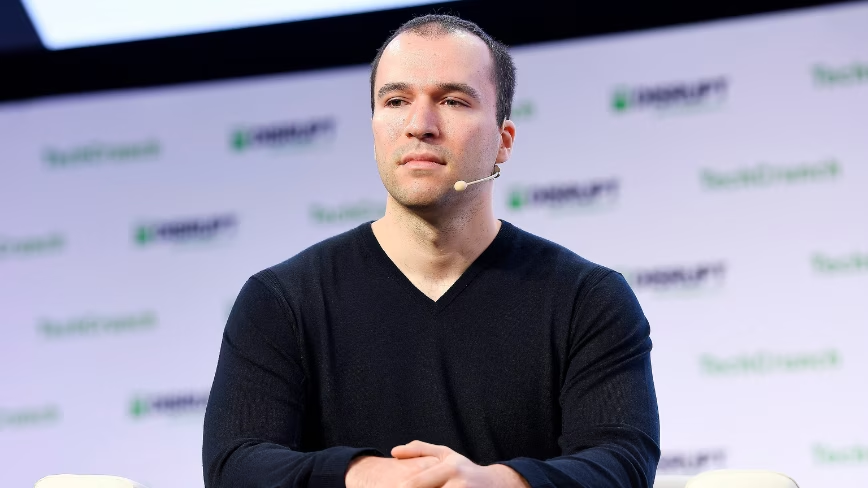

OpenAI president Greg Brockman is widely quoted as saying that artificial intelligence now writes about 80% of OpenAI’s code, up from earlier estimates closer to 20%, and that AI coding tools have moved from a minor assistant role to doing the bulk of code generation. Both AI and Human coverage agree that this comment reflects a broader shift in software engineering workflows toward AI-assisted development, and that comparable claims have been made by leaders at other major AI companies, such as Google and Anthropic, about the growing share of AI-generated code. Reports converge on the basic numerical claim (around 80%), the attribution (Brockman and OpenAI), and the domain (internal software code and developer workflows), and situate the statement within OpenAI’s current product ecosystem of code-generating models and tools.

Both perspectives also agree that, despite high levels of AI-generated code, humans remain ultimately responsible for reviewing, testing, and merging changes into production systems, and that organizations are emphasizing guardrails rather than full autonomy. Coverage consistently notes that Brockman’s remarks fit into a wider pattern of tech executives describing AI as transforming productivity and changing the nature of software work, while still requiring human oversight. Shared context includes the rapid evolution of coding assistants, the competitive landscape among leading AI labs, and the labor-market implications for software engineers, framed as a transition toward higher-level design, verification, and integration tasks rather than a total replacement of human developers in the near term.

Areas of disagreement

Credibility of the 80% figure. AI sources tend to present Brockman’s 80% claim at face value, often highlighting it as evidence of dramatic productivity gains and a milestone in AI capability. Human coverage is more skeptical, juxtaposing the claim with independent studies that show little to no measurable productivity impact at scale and questioning how the 80% is calculated. While AI narratives frame the number as indicative of a near-term future of AI-dominant coding, Human narratives stress the lack of transparent metrics and warn against treating an executive sound bite as empirical fact.

Framing of developer roles. AI sources generally portray the shift as a positive evolution in which engineers move from writing boilerplate to higher-level design, code review, and problem framing, implying a mostly seamless transition. Human coverage is more ambivalent, acknowledging potential upskilling but also raising concerns about degraded skills, overreliance on AI-generated patterns, and the risk that junior engineers may lose opportunities to learn by doing. AI narratives often emphasize empowerment and creativity, whereas Human narratives mix that with anxiety about deskilling and opaque dependencies on proprietary tools.

Labor-market implications. AI coverage commonly hints that such high levels of AI-generated code herald major efficiency gains that could reshape staffing needs, sometimes implying that fewer engineers may be needed for the same output. Human coverage dwells more on the tension between promised productivity and actual organizational outcomes, asking whether claims like Brockman’s may be used to justify hiring slowdowns, role consolidation, or pressure on wages. Where AI coverage focuses on aggregate productivity and innovation, Human coverage more directly interrogates job security, bargaining power, and the distribution of benefits.

Positioning within the AI race. AI sources often present the 80% claim as part of a success narrative in which OpenAI is leading a competitive race to integrate AI into internal workflows, mentioning peers like Google and Anthropic mainly as validating comparators. Human coverage more explicitly situates Brockman’s remarks within marketing and competitive optics, interpreting the number as both a performance claim and a signaling move to investors, regulators, and enterprise customers. AI narratives lean toward celebrating OpenAI’s internal adoption as proof of confidence in its tools, while Human narratives highlight the strategic incentives behind publicizing such an aggressive adoption figure.

In summary, AI coverage tends to embrace Brockman’s 80% claim as strong evidence of transformative productivity and a largely positive evolution in software work, while Human coverage tends to treat it as a strategic, partly promotional statistic that warrants skepticism, closer measurement, and attention to labor and power dynamics.