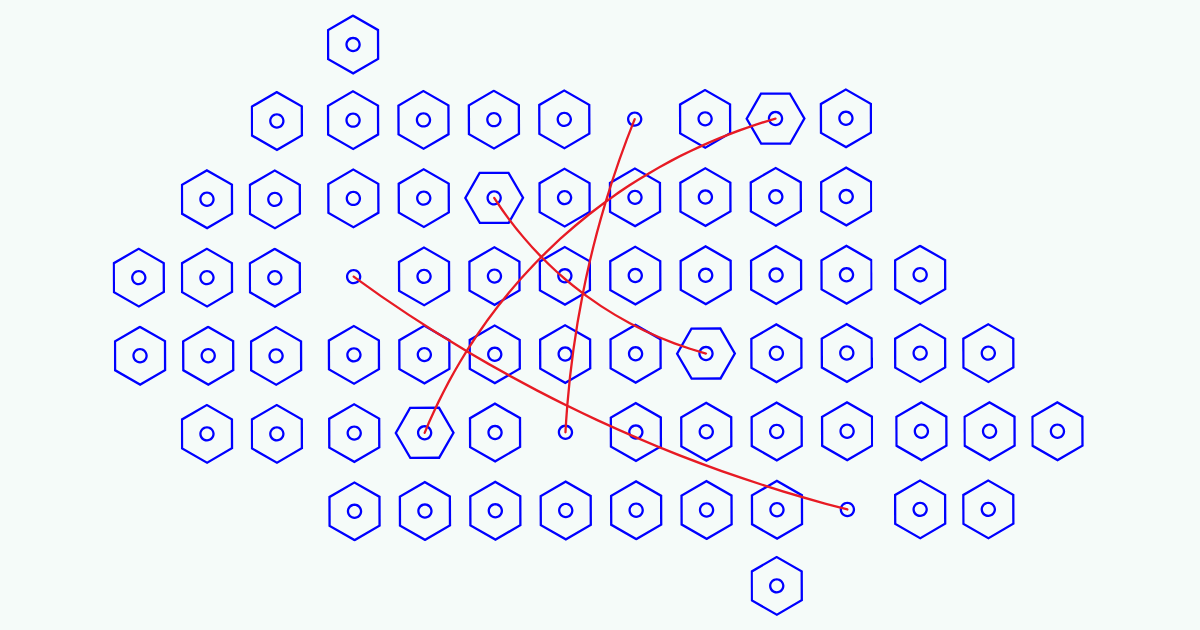

AI and human-aligned coverage agree on the core factual development: Jina AI has detailed how submodular optimization can be applied to selecting optimal subsets of text and passages in large language model workflows. Both perspectives acknowledge that the work is framed around systems like DeepResearch, where many candidate queries, passages, or documents must be generated and then filtered down to a smaller, higher-value subset. Both also recognize that the described applications explicitly include text selection, passage reranking, and context engineering as distinct but related use cases, all of which rely on the ability of submodular functions to balance relevance with diversity in an efficient, theoretically grounded way.

They also concur that this coverage represents a continuation of prior work by Jina AI on submodularity, moving from earlier fan-out query optimization in DeepResearch to a broader set of downstream tasks in information retrieval and LLM prompting. Both perspectives note that the institutional setting is an applied AI company working at the intersection of search, retrieval-augmented generation, and agentic research tools, and that the motivation stems from the growing volume of candidate information that LLM systems must handle. There is shared context that submodular optimization comes from a mature body of combinatorial optimization research and is being repurposed here to make LLM-centric pipelines more sample-efficient, less redundant, and more controllable as these systems are deployed in real-world production environments.
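To make the relevance-versus-diversity trade-off described above concrete, here is a minimal Python sketch of greedy selection under a submodular objective. It is an illustration under stated assumptions, not Jina AI's implementation: the objective (a weighted sum of per-item relevance and a facility-location coverage term), the function names `cosine` and `greedy_select`, the `trade_off` parameter, and the toy data are all hypothetical choices made for this example.

```python
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = sum(a * a for a in u) ** 0.5
    norm_v = sum(b * b for b in v) ** 0.5
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def greedy_select(
    embeddings: Sequence[Sequence[float]],
    relevance: Sequence[float],
    k: int,
    trade_off: float = 0.5,  # assumed weight: 1.0 = pure relevance, 0.0 = pure coverage
) -> List[int]:
    """Greedily pick up to k indices maximizing an assumed objective:

        F(S) = trade_off * sum(relevance[j] for j in S)
             + (1 - trade_off) * sum(max(sim[i][j] for j in S) for all i)

    With non-negative relevance and similarities, F is monotone submodular.
    """
    n = len(embeddings)
    # Clip negative similarities to zero so the coverage term stays monotone.
    sim = [
        [max(0.0, cosine(embeddings[i], embeddings[j])) for j in range(n)]
        for i in range(n)
    ]
    covered = [0.0] * n  # best similarity of each candidate to the selected set
    selected: List[int] = []

    for _ in range(min(k, n)):
        best_j, best_gain = -1, float("-inf")
        for j in range(n):
            if j in selected:
                continue
            # Marginal coverage gain: how much j improves each candidate's coverage.
            coverage_gain = sum(max(sim[i][j] - covered[i], 0.0) for i in range(n))
            gain = trade_off * relevance[j] + (1.0 - trade_off) * coverage_gain
            if gain > best_gain:
                best_j, best_gain = j, gain
        selected.append(best_j)
        covered = [max(covered[i], sim[i][best_j]) for i in range(n)]

    return selected


if __name__ == "__main__":
    # Toy data: passages 0 and 1 are near-duplicates, passage 2 covers a different topic.
    toy_embeddings = [[1.0, 0.0], [0.99, 0.1], [0.0, 1.0]]
    toy_relevance = [0.9, 0.85, 0.5]
    print(greedy_select(toy_embeddings, toy_relevance, k=2))
```

On this toy input, ranking purely by relevance would keep the two near-duplicate passages, whereas the greedy procedure keeps one of them and adds the passage that covers the remaining topic. The weighting between the two terms is a tuning choice; the source does not specify how Jina AI sets it.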

Areas of disagreement

Scope and maturity. AI sources present Jina AI’s work as a concrete, implemented set of techniques directly improving DeepResearch and related pipelines, emphasizing practical readiness and near-term utility, while human coverage tends to infer that these ideas are still largely conceptual and exploratory, without independent verification or broad benchmarks. AI accounts stress that text selection, passage reranking, and context engineering pipelines are already structured around explicit submodular objectives, whereas human commentators frame them as promising prototypes that may require further empirical validation and comparison to standard rerankers.

Innovation framing. AI coverage often characterizes Jina AI’s use of submodular optimization as a significant methodological advance that differentiates DeepResearch from more naive top-k or heuristic approaches, foregrounding the theoretical guarantees of submodularity. Human coverage, by contrast, is more likely to place this work within a continuum of long-standing diversity and coverage methods in information retrieval, suggesting the approach is an intelligent adaptation of known theory rather than a radical breakthrough.
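The coverage does not spell out which theoretical guarantees are meant. As background, and assuming the standard setting of a monotone, normalized submodular function $f$ over a candidate set $V$ with a budget of $k$ items, the defining diminishing-returns property and the classic greedy bound can be written as:

$$
f(A \cup \{x\}) - f(A) \;\ge\; f(B \cup \{x\}) - f(B)
\quad \text{for all } A \subseteq B \subseteq V,\; x \in V \setminus B
$$

$$
f(S_{\text{greedy}}) \;\ge\; \left(1 - \frac{1}{e}\right) \max_{S \subseteq V,\; |S| \le k} f(S)
$$

Here $S_{\text{greedy}}$ is the set built by repeatedly adding the item with the largest marginal gain. A plain top-k ranking, by contrast, treats each item's score independently, so it has no mechanism for discounting an item that repeats what is already selected.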

Implications for LLM reliability. AI narratives highlight these submodular techniques as a way to systematically reduce redundancy, improve coverage of relevant evidence, and thereby increase the reliability and depth of LLM-based research workflows. Human discussions tend to be more reserved, acknowledging potential gains in retrieval quality but questioning how much this alone can mitigate broader LLM issues like hallucinations, reasoning errors, or biased sources, and often calling for more end-to-end evaluations before crediting submodularity with major reliability improvements.

Accessibility and complexity. AI sources describe the framework as a principled yet implementable toolkit that practitioners can plug into existing retrieval and reranking setups with moderate effort, emphasizing its engineering friendliness. Human coverage, when it extrapolates, is more inclined to argue that submodular optimization introduces conceptual and implementation complexity that may be a barrier for typical ML or data engineering teams, implying that its adoption will likely concentrate among more specialized or research-oriented groups unless better tooling and abstractions emerge.

In summary, AI coverage tends to portray Jina AI’s submodular optimization applications as already impactful, technically mature, and central to improving LLM research workflows, while human coverage tends to treat them as promising but still experimental techniques whose broader significance and practicality remain to be demonstrated.